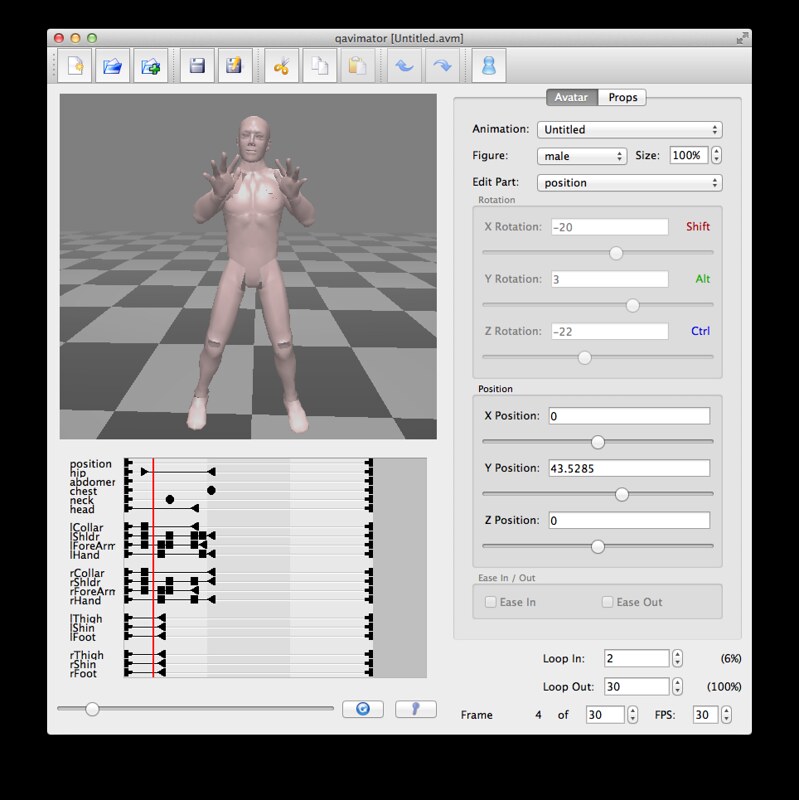

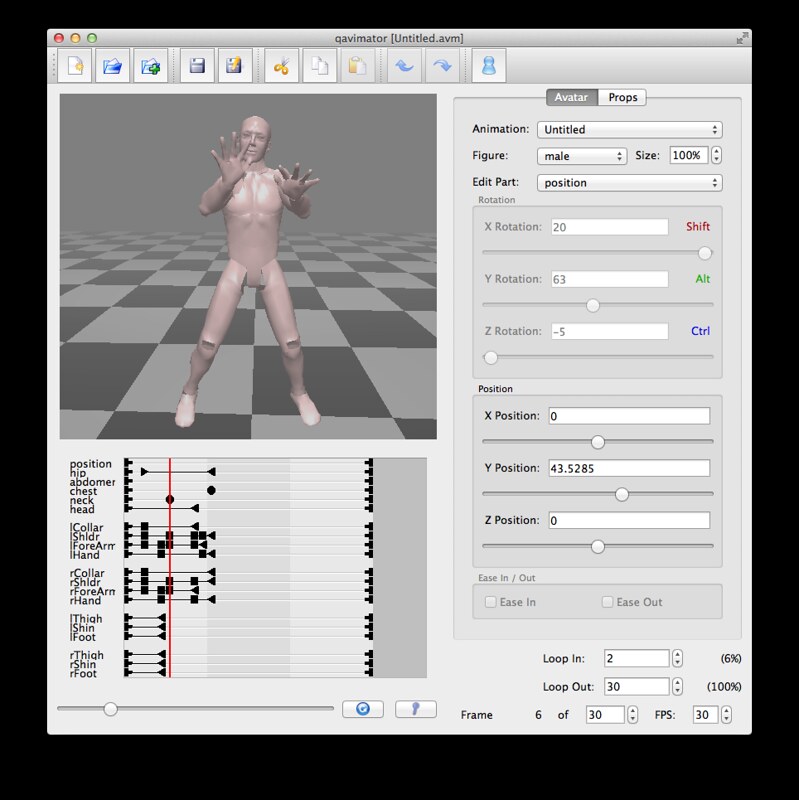

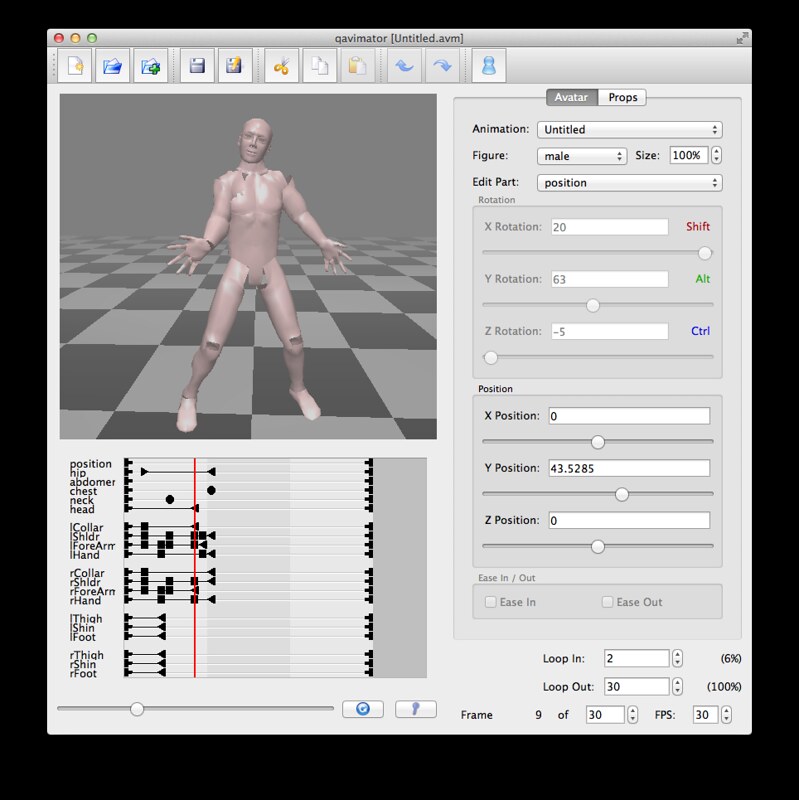

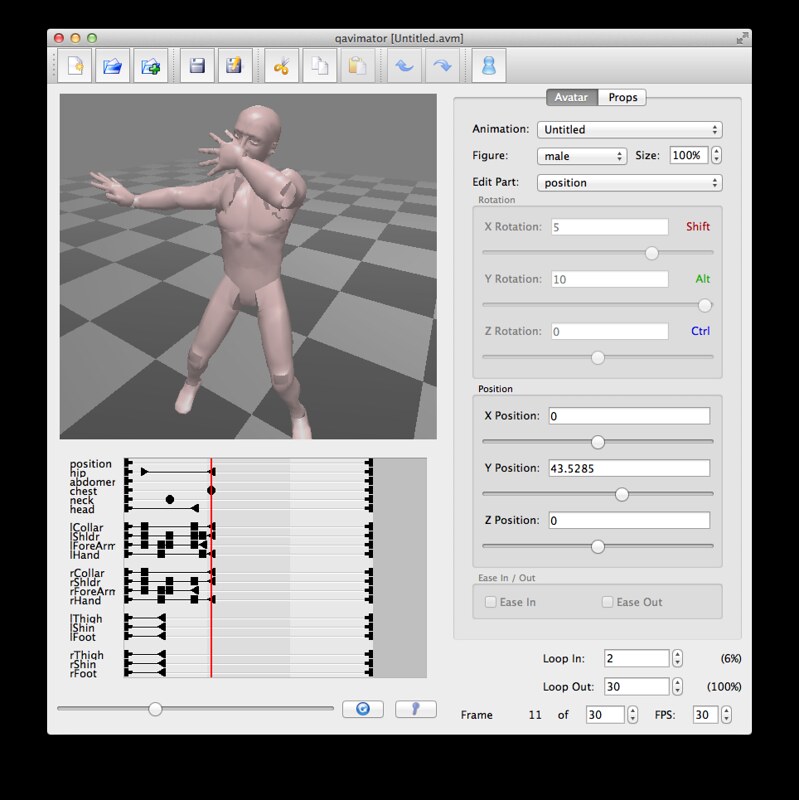

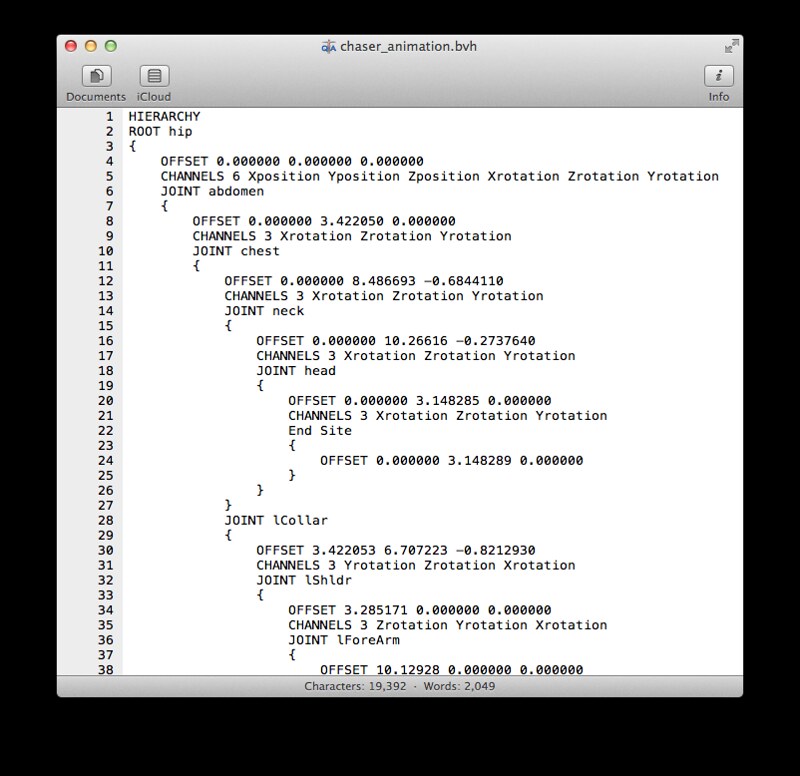

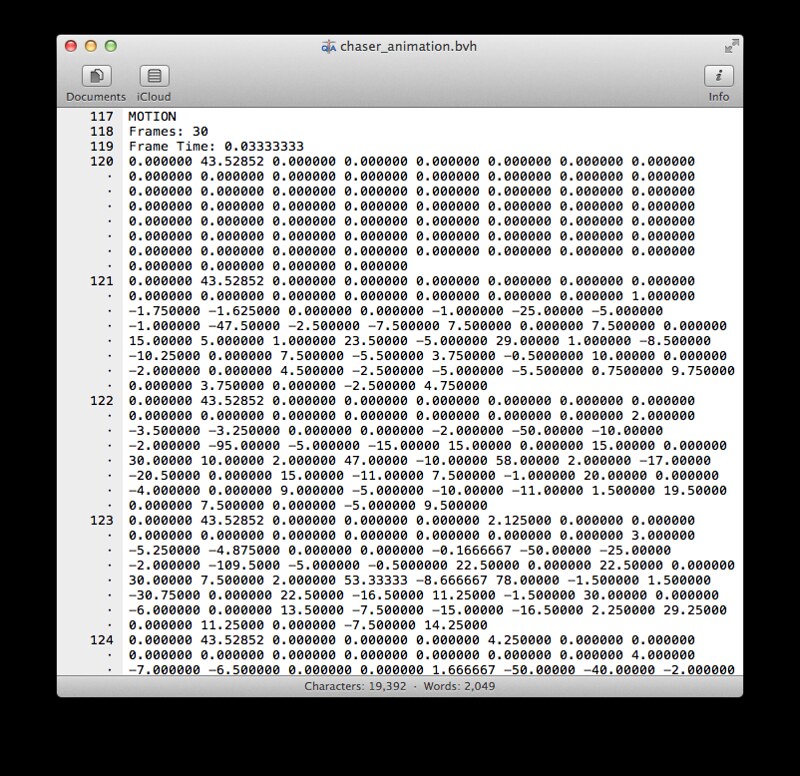

BVH stands for Biovision Hierarchy. The first half of a BVH file contains the skeleton hierarchy information; the second half is the motion data.
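To make that two-part layout concrete, here is a rough Python sketch that splits a BVH file at the `MOTION` keyword and reads each half. The tiny two-joint skeleton in `SAMPLE_BVH` and the helper name `split_bvh` are made up for illustration (this is not the file from the screenshots), and the parsing is deliberately naive: it assumes a well-formed file and that no joint name contains the word `MOTION`.

```python
# A made-up, minimal BVH file: one root joint, one child joint, two frames.
SAMPLE_BVH = """\
HIERARCHY
ROOT Hips
{
    OFFSET 0.0 0.0 0.0
    CHANNELS 6 Xposition Yposition Zposition Zrotation Xrotation Yrotation
    JOINT Chest
    {
        OFFSET 0.0 5.0 0.0
        CHANNELS 3 Zrotation Xrotation Yrotation
        End Site
        {
            OFFSET 0.0 5.0 0.0
        }
    }
}
MOTION
Frames: 2
Frame Time: 0.0333333
0.0 35.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0
0.0 35.0 0.0 0.0 10.0 0.0 0.0 5.0 0.0
"""


def split_bvh(text):
    """Split BVH text into its skeleton half and its motion half (hypothetical helper)."""
    # Everything before MOTION is the skeleton; everything after is motion data.
    hierarchy, motion = text.split("MOTION", 1)

    # First half: collect joint names and total up the declared channels.
    joints = []
    channel_count = 0
    for line in hierarchy.splitlines():
        tokens = line.split()
        if not tokens:
            continue
        if tokens[0] in ("ROOT", "JOINT"):
            joints.append(tokens[1])
        elif tokens[0] == "CHANNELS":
            channel_count += int(tokens[1])

    # Second half: two header lines, then one line of numbers per frame.
    motion_lines = [line for line in motion.splitlines() if line.strip()]
    frame_count = int(motion_lines[0].split(":")[1])
    frame_time = float(motion_lines[1].split(":")[1])
    frames = [[float(value) for value in line.split()] for line in motion_lines[2:]]

    return joints, channel_count, frame_count, frame_time, frames


joints, channel_count, frame_count, frame_time, frames = split_bvh(SAMPLE_BVH)
print(joints, channel_count, frame_count, frame_time)
```

Each frame line carries one number per channel declared in the hierarchy, in the order the joints appear, which is why hand-editing a dance means hunting for the right column in a long row of floats.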

Conclusion of this half-pomodoro? Well, it works, but this is a silly way of editing BVH dance motions (like poking out individual MIDI notes on a screen or hand-typing an SVG file) when you can obviously do motion capture with something like a Kinect in this day and age and generate a BVH from it. But at least now I know how a BVH file is composed! When I have more time to waste I should like to try out Brekel's Kinect 3D Scanner or another of those mocap applications.

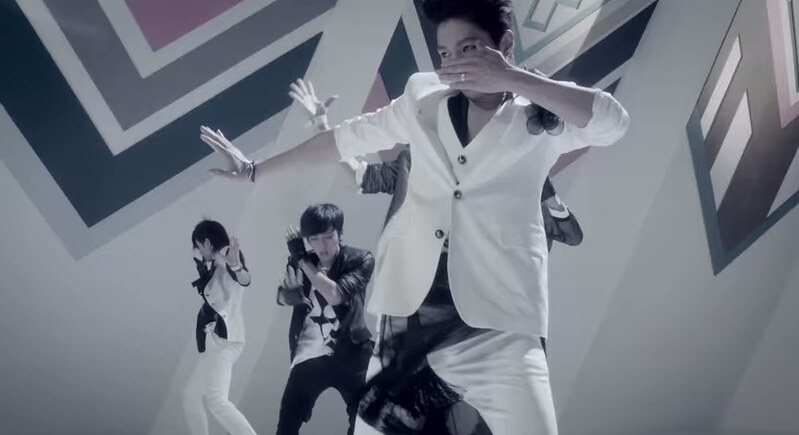

Perhaps the question came so strongly to mind because I've been thinking about the human body as performance recently...
